Aviation accident analysis

Aviation accident analysis is performed to determine the causes of an accident after it has happened. In the modern aviation industry, it is also used to analyze databases of past accidents in order to prevent future accidents. Many models have been used not only for accident investigation but also for educational purposes.[1]

Per the Convention on International Civil Aviation, if an aircraft of a contracting State has an accident or incident in another contracting State, the State where the accident occurs will institute an inquiry. The Convention defines the rights and responsibilities of the states.

ICAO Annex 13—Aircraft Accident and Incident Investigation—defines which States may participate in an investigation, for example: the States of Occurrence, Registry, Operator, Design and Manufacture.[2]

Human factors

In the aviation industry, human error is the major cause of accidents. About 38% of 329 major airline crashes, 74% of 1627 commuter/air taxi crashes, and 85% of 27935 general aviation crashes were related to pilot error.[3] The Swiss cheese model is an accident causation model that analyzes accidents primarily from the human factors perspective.[4][5]
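To make the scale of these percentages concrete, the short sketch below converts them into approximate crash counts and an overall share. The counts are estimates derived from the rounded percentages quoted above, not figures reported by the cited study, and the names `CRASH_DATA` and `estimated_pilot_error_crashes` are illustrative.

```python
# Figures quoted above: (category, total crashes, approximate pilot-error share in %).
CRASH_DATA = [
    ("major airline", 329, 38),
    ("commuter/air taxi", 1627, 74),
    ("general aviation", 27935, 85),
]


def estimated_pilot_error_crashes(total, percent):
    """Approximate count implied by a rounded percentage (an estimate only)."""
    return round(total * percent / 100)


# Estimated pilot-error crashes per category.
estimates = {
    name: estimated_pilot_error_crashes(total, percent)
    for name, total, percent in CRASH_DATA
}

# Share of pilot-error crashes across all three categories combined.
total_crashes = sum(total for _, total, _ in CRASH_DATA)
overall_share = sum(estimates.values()) / total_crashes

for name, count in estimates.items():
    print(f"{name}: about {count} crashes")
print(f"overall: about {overall_share:.0%} of {total_crashes} crashes")
```

Because general aviation accounts for most of the crashes in this sample, it dominates the combined share.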

Reason's model


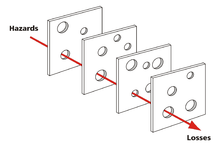

Reason's model, commonly referred to as the Swiss cheese model, is based on Reason's view that all organizations should work together to ensure safe and efficient operation.[1] From the pilot's perspective, maintaining a safe flight operation requires all human and mechanical elements of the system to cooperate effectively. In Reason's model, the holes represent weaknesses or failures. These holes do not lead directly to an accident, because the other defense layers remain in place. However, once the holes in all layers line up, an accident occurs.[6]

There are four layers in this model: organizational influences, unsafe supervision, preconditions for unsafe acts, and unsafe acts.

- Organizational influences: This layer covers resource management, organizational climate and organizational process. For example, a crew that underestimates the cost of maintenance will leave the airplane and equipment in poor condition.

- Unsafe supervision: This layer includes inadequate supervision, inappropriate operations, failure to correct a problem and supervisory violations. For example, if emergency procedure training is not provided to a new employee, the risk of a fatal accident increases.

- Preconditions for unsafe acts: An unsafe act does not arise in isolation. Certain preconditions lead to it; an unstable mental state, for example, is one cause of bad decisions.

- Unsafe acts: These comprise errors and violations. An error occurs when an individual is unable to perform the correct action to achieve an outcome. A violation is the act of breaking a rule or regulation.

Together, these four layers form the basic components of the Swiss cheese model, and accident analysis can be performed by tracing all of these factors.[1][7][8]
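The idea that an accident occurs only when the holes in every layer line up can be sketched as a set intersection. This is a toy illustration, not a method from the cited sources: each layer is given a set of hypothetical hole positions (arbitrary integers), and the function names are made up for the example.

```python
# The four layers named in the text above.
LAYERS = [
    "organizational influences",
    "unsafe supervision",
    "preconditions for unsafe acts",
    "unsafe acts",
]


def aligned_holes(holes_by_layer):
    """Return the positions at which every layer has a hole."""
    hole_sets = [set(holes_by_layer[name]) for name in LAYERS]
    return set.intersection(*hole_sets)


def accident_occurs(holes_by_layer):
    """An accident passes through only if at least one hole lines up in all layers."""
    return bool(aligned_holes(holes_by_layer))


# Hypothetical hole positions: position 3 is a weakness in every layer.
lined_up = {
    "organizational influences": {1, 3},
    "unsafe supervision": {3, 5},
    "preconditions for unsafe acts": {2, 3},
    "unsafe acts": {3},
}

# Same weaknesses, except that the last layer has no hole at position 3.
blocked = dict(lined_up, **{"unsafe acts": {4}})

print(accident_occurs(lined_up))  # True
print(accident_occurs(blocked))   # False
```

In the second case three layers still share a weakness, but the remaining layer acts as a defense and stops the accident.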

Investigation using Reason's model

Based on Reason's model, accident investigators analyze the accident across all four layers to determine its cause. Investigators focus on two main types of failure: active failure and latent failure.[9]

- Active failure is an unsafe act by an individual that leads directly to an accident. Investigators identify pilot error first. Mishandling an emergency procedure, misunderstanding instructions, failing to set the proper flaps on landing, and ignoring the in-flight warning system are a few examples of active failure. Unlike the effects of latent failure, the effects of active failure show up immediately.

- Latent failure usually originates in high-level management. Investigators may overlook this kind of failure because it can remain undetected for a long time.[10] When investigating latent failure, investigators assess three levels. The first level covers factors that directly affect the operator's behavior: preconditions such as fatigue and illness. On February 12, 2009, Colgan Air Flight 3407, a Bombardier DHC-8-400, was on approach to Buffalo Niagara International Airport. The pilots were fatigued, and their inattentiveness cost the lives of everyone on board and one person on the ground when the airplane crashed near the airport.[11] The investigation suggested that both pilots were tired and that their conversation was unrelated to the flight operation, which indirectly caused the accident.[12][13] At the second level, investigators track precursors of the accident related to latent threats. On June 1, 2009, Air France Flight 447 crashed into the Atlantic Ocean, and the 228 people on board were killed. Analysis of the black box indicated that the airplane was being flown by an inexperienced first officer who raised the nose too high and induced a stall.[14] Letting an inexperienced pilot fly the airplane by himself is a case of unsafe supervision. The third level is organizational failure. For example, an airline may decide to reduce spending on pilot training, and the resulting lack of training leads directly to inexperienced pilots.[15][16]

To fully understand the cause of an accident, all of these steps need to be performed. Because an investigation proceeds in the opposite direction to the way an accident develops, investigators need to work backward through Reason's model.
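The backward trace can be sketched as a small data structure: each finding is tagged with its layer, labeled active or latent, and then ordered from unsafe acts back to organizational influences. This is a minimal sketch under the article's description; `Finding`, `failure_type` and `trace_backward` are hypothetical names, and the sample findings reuse the generic examples given earlier rather than any single real accident.

```python
from dataclasses import dataclass

# Layers in the order an accident develops, from the organization down to the act.
LAYERS = [
    "organizational influences",
    "unsafe supervision",
    "preconditions for unsafe acts",
    "unsafe acts",
]


@dataclass(frozen=True)
class Finding:
    layer: str
    description: str


def failure_type(finding):
    """Unsafe acts are active failures; the other three layers are latent."""
    return "active" if finding.layer == "unsafe acts" else "latent"


def trace_backward(findings):
    """Order findings from the unsafe act back to organizational influences."""
    return sorted(findings, key=lambda f: LAYERS.index(f.layer), reverse=True)


findings = [
    Finding("organizational influences", "reduced spending on pilot training"),
    Finding("unsafe acts", "proper flaps not set on landing"),
    Finding("unsafe supervision", "no emergency procedure training for a new employee"),
    Finding("preconditions for unsafe acts", "pilot fatigue"),
]

for finding in trace_backward(findings):
    print(f"{failure_type(finding):6} | {finding.layer}: {finding.description}")
```

Sorting by layer index in reverse keeps the active failure first, matching the order in which investigators identify pilot error before the latent factors behind it.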


References

1. Wiegmann, Douglas A (2003). A Human Error Approach to Aviation Accident Analysis: The Human Factors Analysis and Classification System (PDF). Ashgate Publishing Limited. Retrieved Oct 26, 2015.

2. ICAO fact sheet: Accident investigation (PDF). ICAO. 2016.

3. Li, Guohua (Feb 2001). "Factors associated with pilot error in aviation crashes". Aviation, Space, and Environmental Medicine. 72 (1): 52–58. PMID 11194994. Retrieved Oct 26, 2015.

4. Salmon, P. M. (2012). "Systems-based analysis methods: a comparison of AcciMap, HFACS, and STAMP". Safety Science. 50: 1158–1170. doi:10.1016/j.ssci.2011.11.009. S2CID 205235420.

5. Underwood, Peter; Waterson, Patrick (2014-07-01). "Systems thinking, the Swiss Cheese Model and accident analysis: A comparative systemic analysis of the Grayrigg train derailment using the ATSB, AcciMap and STAMP models". Accident Analysis & Prevention. Systems thinking in workplace safety and health. 68: 75–94. doi:10.1016/j.aap.2013.07.027. PMID 23973170.

6. Roelen, A. L. C.; Lin, P. H.; Hale, A. R. (2011-01-01). "Accident models and organisational factors in air transport: The need for multi-method models". Safety Science. The gift of failure: New approaches to analyzing and learning from events and near-misses – Honoring the contributions of Bernhard Wilpert. 49 (1): 5–10. doi:10.1016/j.ssci.2010.01.022.

7. Qureshi, Zahid H (Jan 2008). "A Review of Accident Modelling Approaches for Complex Critical Sociotechnical Systems". Defense Science and Technology Organisation.

8. Mearns, Kathryn J.; Flin, Rhona (March 1999). "Assessing the state of organizational safety—culture or climate?". Current Psychology. 18 (1): 5–17. doi:10.1007/s12144-999-1013-3. S2CID 144275343.

9. Nielsen, K.J.; Rasmussen, K.; Glasscock, D.; Spangenberg, S. (March 2008). "Changes in safety climate and accidents at two identical manufacturing plants". Safety Science. 46 (3): 440–449. doi:10.1016/j.ssci.2007.05.009.

10. Reason, James (2000-03-18). "Human error: models and management". BMJ: British Medical Journal. 320 (7237): 768–770. doi:10.1136/bmj.320.7237.768. ISSN 0959-8138. PMC 1117770. PMID 10720363.

11. "Aviation Accident Report AAR-10-01". www.ntsb.gov. Retrieved 2015-10-26.

12. "A double tragedy: Colgan Air Flight 3407 – Air Facts Journal". Air Facts Journal. 2014-03-28. Retrieved 2015-10-26.

13. Caldwell, John A. (2012-04-01). "Crew Schedules, Sleep Deprivation, and Aviation Performance". Current Directions in Psychological Science. 21 (2): 85–89. doi:10.1177/0963721411435842. ISSN 0963-7214. S2CID 146585084.

14. Landrigan, L.C.; Wade, J.P.; Milewski, A.; Reagor, B. (2013-11-01). "Lessons from the past: Inaccurate credibility assessments made during crisis situations". 2013 IEEE International Conference on Technologies for Homeland Security (HST). pp. 754–759. doi:10.1109/THS.2013.6699098. ISBN 978-1-4799-1535-4. S2CID 16676097.

15. Haddad, Ziad S.; Park, Kyung-Won (23 June 2010). "Vertical profiling of tropical precipitation using passive microwave observations and its implications regarding the crash of Air France 447". Journal of Geophysical Research. 115 (D12): D12129. Bibcode:2010JGRD..11512129H. doi:10.1029/2009JD013380.

16. Reason, James (August 1995). "A systems approach to organizational error". Ergonomics. 38 (8): 1708–1721. doi:10.1080/00140139508925221.